https://www.compsim.com

Frequently Asked Questions

Question: Why do you consider KEEL a "disruptive technology"?

Answer: We have had several telephone discussions and are having difficulty getting people to understand that KEEL is a “technology”, not just a tool component (the KEEL Toolkit). While the KEEL “toolkit” is interesting, it is the new application capabilities that KEEL makes possible that define KEEL’s value.

The KEEL Toolkit is used to create KEEL Engines that process information in a new way. These KEEL Engines allow complex “behavior” to be integrated into devices and software applications.

We would suggest that creating and deploying these “behaviors” using other techniques would be impractical, especially if you want explainable, auditable results.

KEEL needs to be considered for its transformational characteristics:

KEEL “technology” can also transform other complex domains: medical systems and processes, transportation systems, financial systems, economic and political systems…

KEEL gives systems a “right brain” that allows them to deploy judgment and reasoning. So when KEEL is considered, it should be considered for what the systems can do with a right brain, not just the tool side of the technology.

When we hear KEEL referred to as a “tool”, it is an indication to us that someone “just doesn’t understand”. For example: A submarine is a “tool” of war. Undersea warfare became a new way to fight a war when the submarine was invented. Undersea warfare was transformational. KEEL is a transformational “technology”. A transformational technology is a disruptive technology.

Question: Why do you suggest that KEEL Technology is a form of AI (Artificial Intelligence)? And why do you suggest it might be a "better" form of AI?

Answer: In the mid-1950s, John McCarthy coined the term “Artificial Intelligence”, which he defined as “the science and engineering of making intelligent machines” (https://www.artificial-solutions.com/blog/homage-to-john-mccarthy-the-father-of-artificial-intelligence). This is the focus of KEEL Technology: to deliver intelligence (in the form of cognitive engine components that encapsulate judgment and reasoning skills) that is suitable for incorporation in machines. We suggest that KEEL may be a “better” form of AI because humans remain in control. Humans define how the machine thinks. Humans can restrict adaptive behavior. Humans give machines a “value system” on which to base their judgment and reasoning skills. And KEEL may be a better form of AI because the behavior of KEEL-based intelligent machines will be explainable and auditable (Explainable AI – XAI).

Question: Why do you call KEEL Technology a form of "Explainable AI"?

Answer: The behavior of a KEEL-based system (decisions and actions) is driven by influencing factors. The KEEL “Dynamic Graphical Language” displays the valued influencing factors. When the KEEL Dynamic Graphical Language is driven by recorded influencing factors (using a technique called “Language Animation”), it is easy to trace weighted decisions and actions to their weighted influencing factors. The information fusion process is exposed without going through hidden mathematical models that are difficult to understand. One can (we suggest) learn how to trace the decisions and actions of a KEEL-based system with just a few minutes of training.

Question: What is the "underlying technology" that defines KEEL?

Answer: There are three concepts that define KEEL Technology (all covered by Compsim patents). Before explaining the three concepts, it is important to understand the focus of the technology. KEEL was created to capture and package human-like reasoning (judgment) such that it can be embedded in devices and software applications. In humans this is a right-brain analog process that focuses on the interpretation of "values" for data items and the balancing of interconnected "valued items". It is the human's ability to exercise reason and judgment that has separated humans from computer programs in the past.

The first (and most fundamental) concept in KEEL provides a way to establish "modified values" for pieces of information. To begin with, a piece of information will have a potential value (or "importance"), just by its nature (what it is) and the nature of the problem being addressed. This piece of information can be supported or blocked by other pieces of information (driving or blocking signals), much as neurons in the human brain have excitatory and inhibitory synapses. For example, one might have a task with a series of pros and cons. The importance of the task is modified by the pros and cons to determine the "modified value" of the task. KEEL Technology uses a "law of diminishing returns" to accumulate driving signals. It follows this with another "law of diminishing returns" to accumulate the blocking signals. Blocking signals take precedence over driving signals. In control systems this means that an emergency stop will override all accumulated driving signals.
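The source does not give the actual accumulation formula, so the following Python sketch only illustrates the idea under stated assumptions: all signals are normalized to the range 0 to 1, and each new signal claims a share of whatever headroom the earlier signals left (one common way to express diminishing returns). The names `accumulate` and `modified_value` are hypothetical and are not part of the KEEL Toolkit.

```python
def accumulate(signals):
    """Combine signals in [0, 1] with diminishing returns.

    Assumed formula (not taken from KEEL): each signal claims a share
    of the headroom that earlier signals left unused.
    """
    total = 0.0
    for s in signals:
        if not 0.0 <= s <= 1.0:
            raise ValueError("signals must be normalized to [0, 1]")
        total += s * (1.0 - total)
    return total


def modified_value(importance, driving, blocking):
    """Scale an item's potential importance by its pros and cons.

    Blocking is applied last, so a full block (1.0) forces the result
    to zero no matter how strong the driving signals are.
    """
    drive = accumulate(driving)
    block = accumulate(blocking)
    return importance * drive * (1.0 - block)


if __name__ == "__main__":
    # Two moderate pros and one mild con.
    print(modified_value(0.8, driving=[0.5, 0.5], blocking=[0.2]))
    # An emergency stop modeled as a full blocking signal.
    print(modified_value(0.8, driving=[1.0, 1.0], blocking=[1.0]))
```

In this sketch an item with no driving signals has a modified value of zero; whether a real design treats unsupported items that way is a modeling choice.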

The second concept for KEEL technology is that we are (almost) never dealing with independent accumulations of information. Individual inputs may impact different parts of the problem domain in different ways. Inputs may cause the importance of other pieces of information to change. Information accumulated from the integration of one set of inputs may impact how other inputs are interpreted. So the second concept is that KEEL provides a way for information items to functionally interact with other information items. A set of functional relationships is provided that allows linear and non-linear relationships to be executed. Essentially, we have created the functionality of an analog computer.

KEEL "engines" are functions or class methods, depending on the output language selected. Within the KEEL Toolkit there are two optional processing methods available: The first is an "iterate until stable" approach. The second approach pre-qualifies the design and creates an optimal processing pattern for the worst case scenario.
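How the Toolkit pre-qualifies a design is not described here. One way to picture the second method, offered only as an assumption, is to compute a fixed evaluation order once at design time so that every item is processed after the items it depends on. The sketch below uses Python's standard `graphlib` module (Python 3.9 or later); the `DESIGN` dictionary and the function name `processing_pattern` are hypothetical.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical design: each item lists the items it depends on.
DESIGN = {
    "fuel_concern": [],
    "threat_level": [],
    "pursue_goal": ["fuel_concern", "threat_level"],
    "throttle": ["pursue_goal"],
}


def processing_pattern(design):
    """Return a fixed evaluation order, computed once at design time.

    Raises ValueError when the design contains a dependency loop, which
    is one simple way a tool could reject a design up front.
    """
    try:
        return list(TopologicalSorter(design).static_order())
    except CycleError as exc:
        raise ValueError(f"design rejected, dependency loop: {exc.args[1]}") from exc


if __name__ == "__main__":
    print(processing_pattern(DESIGN))
```

With a fixed order, the run-time cost of one pass is known in advance, which matters for worst-case timing in embedded use.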

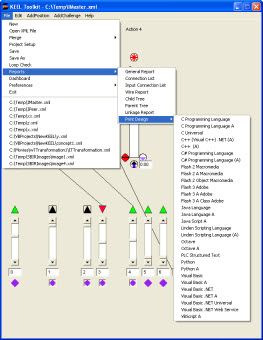

The third concept that defines KEEL is the dynamic graphical language. Because we are commonly dealing with subjective designs (from a human standpoint), there are several advantages of dealing with a graphical language. The first advantage is that the designer is learning how to describe his/her system while it is being designed. The designer can stimulate individual inputs and "see" the information propagate throughout the system. The designer can "see" the impact of change immediately. The designer can create 2D and 3D graphs and see how items interact. A KEEL "engine" is automatically created and executed behind the scenes as it is being developed and tested using the dynamic graphical language. When the design is complete, it can be exported as conventional C, C++, C++ .NET, C#, Java, Flash, Objective-C, Octave (MATLAB), Python, Scilab, Visual Basic 6, Visual Basic .NET, and other languages for integration with the rest of the production system (including .ASPX and WCF web services).

It is also important to understand that KEEL models are equivalent to formulas. They are explicit (traceable by looking at the dynamic graphical language and observing how individual inputs propagate through the system). Designs can easily be extended by adding new inputs to the system and functionally linking them to other data items. We suggest that the models are much easier to understand by viewing and interacting with the "KEEL dynamic graphical language" than by working through complex mathematical formulas.

One question that has been asked several times: What came first, the KEEL execution model (concepts 1 and 2) or the KEEL dynamic graphical language (concept 3)? The answer is that concept 1 came first as we created a model for integrating pros and cons when addressing common structured business problems. Then the functional integration (concept 2) and the dynamic graphical language (concept 3) came together as we began to address the dynamic, non-linear, inter-related, multi-dimensional problems that we found would have to be solved for embedded autonomous systems.

While KEEL has been developed to provide human-like reasoning that can be embedded in devices, it can also be used to describe the human-like behavior of physical systems. Consider, for example, the degradation of physical systems that seem to have a mind of their own as components wear out and degrade over time or perform differently as they heat up. All of these non-linear relationships can easily be modeled and simulated with KEEL. Once one understands "how" KEEL is used to create and execute this processing model, we often suggest that the user forget "how it works" and start "thinking in curves". In this way, the designer thinks about the (often) non-linear relationships between information items, not how the information is actually processed.
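As a rough illustration of "thinking in curves", the Python sketch below describes a non-linear relationship as a handful of points and interpolates linearly between them. This is a generic technique chosen for illustration, not KEEL's internal representation; the helper `curve` and the efficiency-versus-temperature numbers are invented, and the points are assumed to have distinct x values.

```python
from bisect import bisect_right


def curve(points):
    """Build a function that interpolates linearly between (x, y) points."""
    pts = sorted(points)
    xs = [x for x, _ in pts]
    ys = [y for _, y in pts]

    def evaluate(x):
        # Hold the end values outside the described range.
        if x <= xs[0]:
            return ys[0]
        if x >= xs[-1]:
            return ys[-1]
        i = bisect_right(xs, x)
        x0, x1 = xs[i - 1], xs[i]
        y0, y1 = ys[i - 1], ys[i]
        return y0 + (y1 - y0) * (x - x0) / (x1 - x0)

    return evaluate


# Hypothetical relationship: component efficiency falls off slowly at
# first, then sharply as temperature (degrees C) rises.
efficiency_vs_temp = curve([(20, 1.0), (60, 0.95), (80, 0.7), (100, 0.2)])

if __name__ == "__main__":
    for temp in (25, 70, 95):
        print(temp, efficiency_vs_temp(temp))
```

The designer's attention stays on the shape of the relationship (where it bends and how steeply), not on how the value is computed.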

Finally, we would suggest that the only way to get an in-depth understanding of the KEEL underlying technology is to work with it directly at a KEEL workshop. In the 2 to 3 day workshop you will gain an understanding of how to create and debug KEEL cognitive engines, how the KEEL engines process information, and how to integrate the engines into systems.

Question: Why do you differentiate Rules from Judgment?

Answer: Rules are (or are supposed to be) explicit. They define explicit behaviors that are to be produced in response to explicit circumstances. In computers rules are commonly described with IF | THEN | ELSE logic or CASE statements. Judgment, on the other hand, is a human characteristic focused on the interpretation of information in order to decide how to apply rules. It commonly requires "balancing" of (sometimes conflicting) alternatives. In human terms, judgment is considered a parallel process performed by the right hemisphere of the brain. KEEL provides the ability to exercise these parallel, judgmental functions on a sequential processing computer. Policies are commonly developed to guide humans in the interpretation of more complex situations. Humans can then use "judgment and reasoning" to react to situations according to those policies.
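The contrast can be sketched in Python. The first function is an explicit IF | THEN | ELSE rule; the second balances a pro against a con and returns a degree of action. The fan scenario, both function names, and the way the two factors are combined are invented for illustration and are not taken from KEEL.

```python
def rule_based_fan(temp_c):
    """Explicit rule: a fixed response to an explicit circumstance."""
    if temp_c >= 30:
        return "on"
    else:
        return "off"


def judged_fan_speed(temp_c, noise_sensitivity):
    """Balance a pro (cooling need) against a con (noise).

    Returns a fan speed between 0 and 1. The curves and the weighting
    are assumptions made only for this example.
    """
    # Cooling need ramps from 0 at 20 C to 1 at 40 C.
    cooling_need = min(max((temp_c - 20) / 20, 0.0), 1.0)
    # Noise sensitivity (0 to 1) can hold the fan back by up to half.
    noise_cost = min(max(noise_sensitivity, 0.0), 1.0)
    return cooling_need * (1.0 - 0.5 * noise_cost)


if __name__ == "__main__":
    print(rule_based_fan(29), rule_based_fan(30))
    print(judged_fan_speed(30, 0.0), judged_fan_speed(30, 1.0))
```

The rule flips at a single threshold, while the judged version responds gradually and changes its answer as the competing factor changes.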

Another viewpoint was expressed by Dr. Horst Rittel in the 1970s. He coined the terms "tame problems" and "wicked problems". He indicated that with "tame problems" one could write a formula and obtain a "correct" answer. With "wicked problems" one hopes for a "best answer". His field was city planning. He was suggesting that it would be difficult or impossible to write a formula to control a city. We would extend this concept by saying it takes "judgment" to determine how to allocate resources to operate a city.

With KEEL we are allowing devices and software applications to take on some of the judgmental reasoning functions that have historically been impractical to implement with a fixed set of rules. We would suggest that one additional requirement when automating these judgmental functions is that they must be 100% explicit and auditable. One doesn't want to mass produce devices that exercise poor or unexplainable judgment. With KEEL, all decisions and actions are 100% explainable and auditable.

Question: Unless I am missing something, it appears you have implemented an analog computer on a digital computer – that is, you can accept various inputs, scale them, combine them, and provide a scaled output. Let me know what I missed on the engine itself. For example, I didn't see any indication of the ability to support non-linear inputs (for example, thermocouples) or to generate non-linear outputs. I'm trying to figure out what is unique about this.

Answer: Yes, you are totally correct about KEEL representing the functionality of an analog computer. KEEL is intended to model human judgmental decision-making, which is pretty much an analog process. The very small memory footprint of a KEEL "cognitive engine" makes KEEL-based solutions available to some embedded applications, where other approaches would not be practical. Because we are interested in analog “decisions” or “control applications” we can easily handle non-linear inputs and create non-linear outputs (sample graph below). In general, if you already have a specific formula that calculates a correct answer for every input condition then KEEL would probably not be appropriate. On the other hand, if you need to create a system that exerts "relative control" in a dynamic or complex environment, then KEEL might be appropriate. The KEEL toolkit allows the design to be created without writing conventional "rule based" code. The engines created from the design can be integrated into existing applications with very little effort. One other note: KEEL actions or decisions are completely explainable and auditable. This differentiates them from neural nets and fuzzy logic.

One characteristic of a real analog computer is that all inputs are handled simultaneously (or at least determined by the propagation delays through the circuits). Commonly, digital computers process information sequentially. With KEEL, the processing of information is handled within what we call a "cognitive cycle". A snapshot of the system (inputs and outputs) is taken at the beginning of the cognitive cycle. Then, within the cognitive cycle, the system iterates the analytical process until a stable state (of all variables) is achieved. (This equates to the propagation delay through an analog circuit.) Then the cognitive cycle is terminated so the outputs can be utilized.
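The cognitive cycle described above can be pictured with a short Python sketch: inputs are snapshotted, the inter-related items are re-evaluated until no value changes by more than a small tolerance, and only then are outputs released. The function `cognitive_cycle`, the `RELATIONS` table, and the tolerance and pass limit are all assumptions for illustration, not the KEEL engine itself.

```python
def cognitive_cycle(inputs, relations, tolerance=1e-9, max_passes=100):
    """Iterate a set of inter-related items until no value changes.

    inputs:    dict of name -> value, frozen (snapshotted) for this cycle
    relations: dict of name -> function(state) returning the item's new value
    """
    state = dict(inputs)  # snapshot: later changes to inputs are ignored
    for name in relations:
        state.setdefault(name, 0.0)

    for _ in range(max_passes):
        largest_change = 0.0
        for name, fn in relations.items():
            new_value = fn(state)
            largest_change = max(largest_change, abs(new_value - state[name]))
            state[name] = new_value
        if largest_change <= tolerance:
            # Stable: release the outputs and end the cycle.
            return {name: state[name] for name in relations}
    raise RuntimeError("design did not settle; it may be unstable")


# Hypothetical design: two alternatives that each hold the other back.
RELATIONS = {
    "a": lambda s: s["want_a"] * (1.0 - 0.5 * s["b"]),
    "b": lambda s: s["want_b"] * (1.0 - 0.5 * s["a"]),
}

if __name__ == "__main__":
    print(cognitive_cycle({"want_a": 0.8, "want_b": 0.6}, RELATIONS))
```

Neither alternative can be evaluated in isolation here, so the loop plays the role of the propagation delay in an analog circuit.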

Think of how a human might make a judgmental decision between a number of inter-related alternatives. The human integrates pros and cons of each alternative and determines how potentially conflicting objectives are met. The human may allocate resources across several of the alternatives or apply all resources towards one alternative. The human will be balancing each alternative against the others to determine how to proceed. This is an iterative process for the human because the selection of any alternative cannot be done in isolation. All alternatives must be considered together. KEEL-based solutions perform the same way. The entire system is processed during the KEEL cognitive cycle.

ALSO: While an analog computer "could" be designed such that unstable conditions could exist, the KEEL design tools highlight and disallow these designs.

The 3D graph above shows the integration of two non-linear functions (2 in and 2 out). With KEEL we are commonly dealing with many-to-many relationships that cannot be graphed in just 3 dimensions. The KEEL dynamic graphical language provides another way to "see" and "test" these types of relationships.

Question: How does KEEL differ from Fuzzy Logic?

Answer: KEEL has often been compared to Fuzzy Logic. Both KEEL and Fuzzy Logic can support relative, judgmental, analog values. Fuzzy Logic, however, is difficult to explain or audit in human terms. Fuzzy Logic is based on the concept of linguistic uncertainty, where human language is not sufficient to exactly define the value of words: cold, cool, warm, hot… Geometric domains are used to describe values: the degree of cold is described as participation in the cold geometric domain. Geometric domains are combined to approximate what the data is supposed to mean. The process is called fuzzification. There is art in selecting the appropriate geometric shapes that are used in Fuzzy Logic.
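For readers unfamiliar with fuzzification, the Python sketch below shows the general technique with triangular membership functions: a crisp temperature is converted into degrees of participation in each geometric domain. The shapes and breakpoints are invented for the example, which is exactly the "art" referred to above; this is generic Fuzzy Logic, not KEEL.

```python
def triangle(a, b, c):
    """Return a triangular membership function: 0 at a, 1 at b, 0 at c."""
    def membership(x):
        if x <= a or x >= c:
            return 0.0
        if x <= b:
            return (x - a) / (b - a)
        return (c - x) / (c - b)
    return membership


# Hypothetical geometric domains, in degrees C.
TEMPERATURE_SETS = {
    "cold": triangle(-10, 0, 12),
    "cool": triangle(5, 13, 20),
    "warm": triangle(15, 22, 30),
    "hot": triangle(25, 35, 50),
}


def fuzzify(x, sets=TEMPERATURE_SETS):
    """Map a crisp value to its degree of membership in every set."""
    return {label: fn(x) for label, fn in sets.items()}


if __name__ == "__main__":
    print(fuzzify(17))
```

A reading of 17 degrees participates partly in "cool" and partly in "warm"; moving any breakpoint changes those degrees, which is why the choice of shapes matters.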

KEEL, on the other hand, defines information with explicit values and explicit relationships. KEEL supports the dynamic changing importance of information, which allows the reasoning model to change with the environment. Complex relationships can be traced to see their exact impacts. It is easy to see and audit the reasoning process. The graphical language can be animated to show decisions and actions at any point in time. This makes KEEL an appropriate choice when the decision or actions of the system need to be explained and understood.

Fuzzy logic also focuses on the interpretation of "individual signals". Additional logic is required to integrate fuzzified signals into a system design. KEEL, on the other hand, is a system processing model. The KEEL "dynamic graphical language" focuses on how the "system" integrates information (linear and non-linear).

Questions for your "fuzzy" designers:

Question: How does KEEL differ from Artificial Neural Nets (ANN)?

Answer: While both KEEL and Artificial Neural Nets support 'webs' of information relationships, ANN webs are taught by showing them patterns to recognize. When an ANN-based system makes a decision, it is based on the interpolation between points it was taught. ANN-based systems cannot explain why they make decisions. Because they are taught patterns, they have problems recognizing situations that they have not been taught. In other words, they do not react well to surprise situations. Since ANN-based systems may not be able to explain why they do what they do, the developers of ANN-based systems may be subject to liability concerns in safety critical systems (if they make the wrong decision due to insufficient pattern training). When new information items need to be included in a neural net system, the entire training phase may need to be repeated. This may be a significant cost and time-to-market concern.

There may be some/many applications where one may desire human intervention to be incorporated into the decision-making process. Intervention may be non-linear. There may need to be several non-linear control signals. With several non-linear control variables in a system it may be difficult to appropriately train ANN-based systems with this type of control. With KEEL, it is easy to integrate human intervention into the decision-making process in order to adjust/modify how the system interprets information.

It may not be appropriate to integrate multiple independent problem segments into a single ANN controller, because “all variables in a neural net are linked, even variables that are totally independent”. This is not a problem with KEEL since the designer determines the relationships.

Absolutes (like emergency stop) should be handled external to the ANN design to ensure they are handled appropriately. One doesn’t want an absolute to be determined by interpolation between taught patterns. For this reason ANN approaches may not be suitable for handling "boundary conditions" without external logic. This is not a problem with KEEL technology as all models are explicit; they are not the result of interpolation between taught points.

KEEL webs model the judgmental reasoning of human experts by defining explicitly how information items are valued and how each information item interacts with other information items. KEEL Technology is an "expert system" technology, because models are created by a human domain expert. Because KEEL webs are designed with a set of visible graphical functional relationships, every decision / action can be explained and audited. KEEL based systems are also easy to extend without starting over.

Question: How does KEEL compare to "Machine Learning"?

Answer: Wikipedia: "Machine Learning is a subset of artificial intelligence in the field of computer science that often uses statistical techniques to give computers the ability to "learn" (i.e., progressively improve performance on a specific task) with data, without being explicitly programmed. Machine Learning evolved from the study of pattern recognition that can learn from and make predictions on data."

Stated differently: With Machine Learning, the knowledge contained in the machine learning system comes from the data it was provided. With "supervised learning", a human provides the data from which the machine learns.

With KEEL, the "human" tells the machine how to interpret information. This interpretation of information is completely exposed. Nothing is hidden. The human-defined information interpretation model can easily be reviewed and adjusted if necessary.

One could say that the purpose of Machine Learning is to generate knowledge without the human effort of thinking and understanding (human-outside-the-loop).

The purpose of KEEL is to deliver human-like expertise to machines that is the result of the human effort of thinking and understanding (human-on-the-loop).

Question: How does KEEL compare to "tensor-based machine learning"?

Answer: With KEEL there are no vectors (in the geometric sense or in the programming sense), no covectors, no tensor products, no matrix-manipulation functions, no "artificial neural net-machine learning feedback logic", and no pattern training. In tools like "TensorFlow", there are terms like "features", and KEEL does incorporate the concept of features (or influencing factors) in its syntax. The sliders in KEEL, or faders, are simply there to provide visual and interactive variable pieces of information during development or as a means of auditing behavior of a real-world decision or action in an "after-mission review". These sliders allow the domain expert to interact with the model as it is being created, so the domain expert can "see" and "test" the impact of the variable values as the design is being created. Once complete, there is no user-interface to the KEEL Engine.

KEEL uses arrays as a means of storing information: inputs (features), outputs, functional relationships, and internal storage. This may be similar to the electrical charge stored in a neuron at a specific location in the human brain. Arrays simply provide the storage and access mechanism. The KEEL engine is just a function to integrate information and provide results. The domain expert is "thinking about influence" and "thinking about how influencing items are aggregated to control outputs" as the design is created.

Compsim’s view of AI, or intelligence in general, is the application of judgment and reasoning to solve problems. This is the objective with KEEL. We suggest this is an alternative to following static, sequentially processed rules to solve problems (IF THEN ELSE) that computers have been doing for years. Rules provide scripts to solve specific problems. Judgment and reasoning are generalized processes or guidelines to address problems that have not been specifically encoded in rules. This is an alternative to pattern matching approaches.

Since we are not attempting to “build a human”, we don’t think it is important to mimic the architecture of the human brain or exactly how information is stored in the human brain. We separate memory (past biased knowledge) from the instantaneous interpretation of new information with past knowledge (integration of past and new information) to make decisions or exert adaptive control. Our view of judgment and reasoning is that humans establish values for information items (features), and those information items are accumulated (pros and cons) to drive decisions and actions. Decisions and actions at one instant may be different at the next as information ages and temporal impacts change. This is what is done with KEEL: "collective processing" of information (not sequential processing and not parallel processing). This may not be the general definition of AI today, but we think many people want the delivery of expertise, and are not interested in how that expertise was gathered (until something goes wrong and what happens needs to be explained). When KEEL-based decisions or actions need to be explained, we use Language Animation to explain exactly how decisions or actions are determined.

Question: How does KEEL Technology compare to Neuromorphic Engineering, also known as neuromorphic computing?

Answer: From Wikipedia: "Neuromorphic engineering, also known as neuromorphic computing, is a concept developed by Carver Mead in the late 1980s, describing the use of very-large-scale integration (VLSI) systems containing electronic analog circuits to mimic neuro-biological architectures present in the nervous system. In recent times the term neuromorphic has been used to describe analog, digital, and mixed-mode analog/digital VLSI and software systems that implement models of neural systems (for perception, motor control, or multisensory integration).

A key aspect of neuromorphic engineering is understanding how the morphology of individual neurons, circuits and overall architectures creates desirable computations, affects how information is represented, influences robustness to damage, incorporates learning and development, adapts to local change (plasticity), and facilitates evolutionary change.

Neuromorphic engineering is a new interdisciplinary subject that takes inspiration from biology, physics, mathematics, computer science and electronic engineering to design artificial neural systems, such as vision systems, head-eye systems, auditory processors, and autonomous robots, whose physical architecture and design principles are based on those of biological nervous systems."

We would suggest that the Neuromorphic Engineering players are the AI guys that want to build a human: Neuro = neurons, Morphology = organic. Using the above definition to compare: you have to know biology, physics, mathematics, computer science and electronic engineering to do neuromorphic engineering.

Once you understand the KEEL dynamic graphical language, you only need to understand the particular problem you want to automate to utilize the technology. One of our patents covers the deployment of KEEL Engines as VLSI or FPGA circuits. You might want a hardware only solution for very specific problems, especially those that might require extremely high speed. We would suggest that with KEEL, you don't need to concern yourself with the intimate details of every neuron, etc. You focus only on how information items work together to make decisions and exert control. With KEEL, you still have to be aware of your system architecture. With KEEL, one isn't trying to create a human. One is trying to create a machine that is more adaptive to changing information, yet is still operating on policies created by humans.

Question: How does KEEL Technology differ from Agent Technology?

Answer: This is comparing a "How" to a "What". "In computer science, a software agent is a piece of software that acts for a user or other program in a relationship of agency. Such 'action on behalf of' implies the authority to decide which (and if) action is appropriate." (Wikipedia). KEEL can add "and how" to the definition. KEEL provides a means of creating, testing, and auditing the behavior of complex information fusion models that can easily be integrated into a software agent. This may be especially valuable if the agent is responsible for interpreting complex information relationships for deployment in real time systems, or where the economics of developing complex models can benefit from rapid development cycles and small memory footprint. The KEEL provision of "auditable control decisions" may also satisfy the demand for some agent-based systems.

Question: How would you compare KEEL to probability based solutions (Bayesian / Markov / etc.)?

Answer: Probability based solutions work well when you can obtain good statistics. This might be the case in static situations, like diagnosing disease in patients where normal values are gathered across a large number of tests. However, it is often difficult to get good statistics, especially on non-linear systems composed of multiple inputs. With KEEL, one uses common sense to define how information is to be interpreted. For example, defining concern about running out of fuel is not really a probability problem. With KEEL, you would model how you interpret your concern for running out of fuel in your decision to pursue some goal. With KEEL you model how you want the system to process information. You do not create answers to specific problems. This way you create a robust solution that can solve a number of problems, not just an individually stated problem.

The thought process for a probability based system focuses on the best way to solve a problem, while with KEEL, one models what the system will do, given a variety of inputs and options.

Probability-based systems also risk being corrupted by incorrect or misleading probabilities, for example when they are overwhelmed with too much data and lack auditability. There may be too much "trust" in the data.

Question: How does KEEL differ from conventional AI Expert Systems?

Answer: Conventional rule-based expert systems use approaches like Forward and Reverse Chaining. Reverse chaining systems start with a solution and work back through all the data to determine whether the solution was valid. This approach has worked for simple decisions when some data might be missing. Forward chaining systems start with the data and try to determine the solution, but suffer from missing information components. Rule-based systems supplied the concepts of confidence factors or certainty factors as part of the math behind the results. These types of systems were commonly used to evaluate static problems where the rules are fixed and the impact of each rule is stable. In many real world decision-making situations rule based systems quickly become complex and hard to understand. Computer programs based on rule based systems are usually expensive to develop and difficult to debug. Furthermore, they can be inflexible, and if changes occur, may require complete recoding of system solutions. Bayesian analysis is another approach that focuses on statistical analysis and may be appropriate when there is an opportunity to gather a strong confidence in probabilities. This approach may not work well in dynamic environments.
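For comparison, forward chaining in a conventional rule-based system can be sketched in a few lines of Python: start from the known facts and keep firing rules until nothing new can be concluded. This is a generic textbook-style illustration with invented facts and rules; it is not KEEL and not any particular expert-system product.

```python
def forward_chain(facts, rules):
    """Fire rules until no new facts appear.

    rules: list of (set_of_required_facts, concluded_fact) pairs
    """
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for required, conclusion in rules:
            # A rule fires only when every required fact is present.
            if conclusion not in known and required <= known:
                known.add(conclusion)
                changed = True
    return known


# Hypothetical rule base.
RULES = [
    ({"engine_hot", "coolant_low"}, "leak_suspected"),
    ({"leak_suspected"}, "schedule_service"),
]

if __name__ == "__main__":
    print(sorted(forward_chain({"engine_hot", "coolant_low"}, RULES)))
    # With one fact missing, no rule fires and nothing is concluded.
    print(sorted(forward_chain({"engine_hot"}, RULES)))
```

The second call shows the weakness mentioned above: a single missing piece of information stops the chain entirely, because each rule either fires or does not.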

Rather than defining specific "rules", with KEEL one describes how information items are interpreted. This is more akin to defining policies than writing rules. When one writes "rules" one has a specific answer in mind. With KEEL, one is describing how the system is to respond to information. KEEL defines policies by identifying information items with a level of importance. Each information item can impact other information items to create a web of information. Components of the information web can be exposed as outputs of the system. The 'expert' (designer) defines the information items, defines how important each is, and defines how they inter-relate, using a graphical toolkit. This map of information defines the policies. Forward and reverse chaining models can be created in KEEL. But, with KEEL, this is done without textual programming. The graphical model allows complex situations to be modeled rapidly and tested before being translated to conventional code. In some cases, KEEL systems can use information derived from Bayesian Analysis, Fuzzy Logic, ANN systems as well as sensors, database driven solutions, or human input.

Within the discussion of conventional AI Expert Systems one might be interested in a comparison of a KEEL Engine and an Inference Engine:

From Wikipedia: "In computer science, and specifically the branches of knowledge engineering and artificial intelligence, an inference engine is a computer program that tries to derive answers from a knowledge base. It is the "brain" that expert systems use to reason about the information in the knowledge base for the ultimate purpose of formulating new conclusions." Taken at this level, one could say that a KEEL Engine would be a form of an inference engine because it uses a human expert's understanding of a problem domain captured in the engine and because it focuses on "reason" or judgment to determine outputs.

However, when one looks deeper into the conventional definition of an Inference Engine one quickly identifies some differences and some similarities. Using the Wikipedia definition and an associated section on Inference Engine Architecture, there is a suggestion that an inference engine is based on a collection of symbols upon which a set of firing rules are executed. Broadly interpreted, KEEL could fit in this definition. Data items, their values, and how they are inter-related control the functionality of the system.

The concept of "firing rules" is not used in KEEL. "Rules" are not things that a KEEL designer considers. A KEEL designer is primarily concerned with how information items change in importance and how information items are inter-related. Firing rules indicate an intermediate state is observable and may have some value, potentially during some debugging operation. Tracing wires and observing visually the importance of information allows a broader system view of the problem domain than looking at individual rules as they fire.

The Wikipedia definition suggests that Inference Engines have a finite state machine with a cycle consisting of three action states: match rules, select rules, and execute rules. In KEEL, the designer is not conscious of "states" as it is a more organic concept. With KEEL, conflicting rules are "balanced collections" of data (as in the concept of balancing alternatives). There is also a suggestion that an Inference Engine has rules defined by a notation called predicate logic where symbols are valued information items. With KEEL, the information items (aka symbols) in the KEEL Engine do carry with them value information, but there is certainly no predicate logic notation involved. The KEEL dynamic graphical language provides the notation. Applications where the values of variables change with non-linear functional relationships are a target of KEEL Technology.

If one includes in the definition of an inference engine that it includes three elements: 1) an interpreter, 2) a scheduler, and 3) a consistency enforcer, then KEEL is further differentiated.

With KEEL the "expert knowledge" is a definition of how information items (symbols using the above definition) are valued and inter-related. There are no visible "intermediate states" in the execution of a KEEL Engine. KEEL Engines equate to a single "information fusion formula" (that might be very complex) where all inputs are effectively processed simultaneously (as in an analog system where there is no value in contemplating intermediate states as the signals propagate through the system).

It is likely that one "builds" an inference engine. Every one will likely be different. All KEEL Engines (the processing function), on the other hand, are effectively identical (some optimization may be used). With KEEL, the problem definition is maintained in tables. The table data will be different, but not the code. This is a subtle difference that may be most important to embedded applications. It may also be important for organizations that need "certified code", where the cost of certification may be an advantage.
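The distinction between fixed code and problem-specific tables can be illustrated with a minimal sketch. This is not the KEEL Engine: the table layout, the `evaluate` function, and the weighted-sum rule are all assumptions chosen only to show one processing function driven by different data tables.

```python
from typing import Dict, List, Tuple

# Hypothetical table format: each output maps to (input_name, weight) pairs.
Table = Dict[str, List[Tuple[str, float]]]

def evaluate(table: Table, inputs: Dict[str, float]) -> Dict[str, float]:
    """Fixed processing function: the same code runs for every problem."""
    outputs = {}
    for out_name, links in table.items():
        total = sum(weight * inputs.get(name, 0.0) for name, weight in links)
        # Clamp to the normalized range 0..1 (an assumption of this sketch).
        outputs[out_name] = max(0.0, min(1.0, total))
    return outputs

# Two different "problems" are just two different tables.
brake_table: Table = {"brake": [("obstacle", 0.8), ("speed", 0.2)]}
alarm_table: Table = {"alarm": [("smoke", 0.5), ("heat", 0.5)]}

brake = evaluate(brake_table, {"obstacle": 1.0, "speed": 0.5})
alarm = evaluate(alarm_table, {"smoke": 0.2, "heat": 0.4})
```

In this sketch only the tables would need to change between applications, while `evaluate` stays the same.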

Question: How does KEEL differ from conventional rules engines / rules-based systems?

Answer: In a sense, KEEL could be considered a rules-based system (KEEL Engines compared to rules engines). However, conventional rules-based systems are based on IF | THEN | ELSE discrete logic. These systems work well when dealing with compartmentalized data, because one is dealing with simple comparisons, where the rule either fires or doesn't fire. When one is dealing with more complex relationships (where multiple, non-linear inter-relationships between information items exist), then the IF | THEN | ELSE logic may become very complex and hard to manage. For example, situations where pieces of information are treated differently as the situation changes, or situations that are based on the value of other pieces of information (time and space).

Using conventional rule-based systems, it may be hard to visualize the rule set as a whole, because one is sequentially processing the rules, one at a time.

KEEL, on the other hand, processes all information items "together" in a balancing process. When one creates the model using the "dynamic graphical language", one is interacting with the entire problem set simultaneously. Rules defined with the KEEL dynamic graphical language define how information items are to be interpreted in a dynamic (perhaps non-linear) environment that would be very difficult to define with conventional sequentially processed rules.

With conventional rule-based systems, one may be defining how to solve "a" problem. With KEEL, one is defining how to interpret information in a more abstract way that allows the KEEL-based rules to address dynamic problems and define more "adaptive" rules.
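The contrast between a discrete rule and a balanced, graded response can be sketched as follows. Both functions are hypothetical illustrations: the threshold, the weighting, and the fan example are assumptions, and the second function is only a simple stand-in for the idea of balancing supporting and opposing information, not KEEL's actual processing.

```python
def rule_based_fan(temperature: float) -> float:
    """Discrete rule: the rule either fires (fan fully on) or it does not."""
    if temperature > 0.7:
        return 1.0
    else:
        return 0.0

def balanced_fan(temperature: float, noise_limit: float) -> float:
    """Graded response: cooling demand is balanced against a noise constraint."""
    drive = temperature          # supporting pressure
    resist = noise_limit * 0.5   # opposing pressure, weighted at half importance
    return max(0.0, min(1.0, drive - resist))

discrete = rule_based_fan(0.6)     # below the threshold, so the rule does not fire
graded = balanced_fan(0.6, 0.4)    # a partial fan speed between 0 and 1
```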

Question: How does KEEL differ from scripted AI languages like CLIPS?

Answer: They are completely different for several reasons. First, CLIPS is a toolset and a language. KEEL is a technology that incorporates a processing engine architecture, as well as a toolset and language.

CLIPS is like a number of "scripting" languages that utilize text based "rules". It has the same fundamental IF | THEN | ELSE structure of most conventional programming languages. These languages support a number of services that support what we call "Conventional AI" applications. It appears that one programs CLIPS like any other "conventional" programming language, meaning that one uses an "editor" to write the script. This can then be compiled into an application. At the machine level, one is probably left with (potentially large) methods that are described with the script. Like any scripted computer language, they may suffer from typing errors as well as logic errors. Debugging these applications is often a time consuming activity.

CLIPS is completely different than KEEL. We don't suggest that there is anything wrong with scripted languages or conventional programming tools. We suggest that these scripted languages map to a human's left brain activity. KEEL focuses on "judgment and reasoning" that, we suggest, are more image processing (right brain) functions, than static rule-based functions. KEEL is more like differential calculus, except that it is done graphically rather than with scripted formulas. There is no "text" in KEEL, except for naming data items and the "names" are not used at all in the logic. This means you cannot have a "typing" error. You only have to deal with the relationship issues.

With KEEL one is designing systems where the solution is the analog (relative) control of multiple variables. KEEL focuses on solving dynamic, non-linear problems, where the importance of information items changes continually. It addresses inter-related problem sets, where each solution impacts other problems within the same problem domain.

With scripted languages the designer is thinking in terms of defining "rules" of how the system is to operate. It is common for a designer to consider a rule from a static situation. With KEEL, the designer is thinking about how information items interact in the solution of a problem. The designer thinks of dynamic, non-linear relationships (curves). The designer is observing the system balance alternatives. The designer then determines if the performance is appropriate.

Question: Why don't you include a database in the KEEL Engine?

Answer: KEEL Engines are small memory footprint functions (or class methods) depending on the language in which they are deployed. There are no external libraries required. When targeting small, embedded applications a user may choose to use dedicated memory arrays for data storage. In other cases historical data may be saved in external databases. In other cases, no historical records may be needed at all. In software applications the user may already have a database in place. Every different kind of application may have different needs that can benefit from a different database architecture. Since KEEL Engines do not care where information comes from (sensors, human input, local or distributed database, etc.) we leave that option open to the system engineers.

Question: Why do you suggest there is a different "mindset" for the developer?

Answer: We suggest that concept development in KEEL is different from other paradigms. For example: developing a simple computer program: The "programmer" has an idea or is given an idea for a program. He/she may or may not outline the program structure using some kind of graphing technique to show data flow. Then coding begins. The programmer is involved in thinking about how to structure the code to make things happen. The programmer's mindset is focused on symbol names and language instructions and how to use the computer instructions to accomplish the task. Depending on the program, the programmer may build a user interface to test the program. Only then can anyone evaluate how well the programmer accomplished the task.

With KEEL, the "designer" is considering many things when creating the application. The designer is considering:

Using KEEL, all of this is done graphically, so the designer is always involved in the problem (not how to create "code" to express the problem). The designer is constantly "testing" the design as it is created to see how it reacts. When the model is complete, it can be given to the "programmer" to integrate it with the rest of the system.

With KEEL, one is commonly dealing with complex scenarios that many times have non-linear components (the changing importance of information items based on time, space or simply the relationships between other information items). Many times the problems have never before been characterized because of their complex inter-relationships. This means that the designs evolve as they are being developed and the designer clarifies his or her understanding of the problem. The KEEL "dynamic graphical language" greatly accelerates this process. Changes can be made and reviewed immediately. There is no need to translate the idea into "code" where the code has to be debugged before it can be evaluated.

For this reason, we suggest that domain experts (not necessarily mathematicians or software engineers), can create and debug the models for these non-linear systems, greatly reducing lifecycle costs to the user/organization.

Another benefit is the visibility of the solution. If these complex behaviors were encoded using common IF | THEN | ELSE logic, the result would likely be a large monolithic code segment that would be difficult to understand. With KEEL, the model is easily visible where inter-relationships and the instantaneous importance of information can be seen while designing the system. Auditing of decisions and actions is easily available. With KEEL's "animation" capabilities, one can even monitor the reasoning capabilities of systems while they are in operation.

Question: Why do you say that with KEEL, you tell machines "how to think" and how does this differ from "supervised machine learning", or "deep learning"?

Answer: With KEEL, using the KEEL Dynamic Graphical Language (DGL) you give the machine a "value system". By this we mean that the machine is told how to value its information sources (inputs) and how to define the value or impact of decisions or actions that it can deliver. Using the KEEL DGL one defines how mathematically explicit, abstract information is integrated to control the decisions or actions. While at first this may appear to be a difficult problem, using the KEEL DGL, it is easy to get started and easy to refine. While this might be possible using higher level mathematics (that few could understand), this is easy with the KEEL DGL that requires no math at all. So with KEEL, you tell the machine how to integrate information and you can get immediate feedback on how the system would respond (how it thinks and acts). This equates to writing a complex formula (using higher level mathematics that may not be easily explainable, auditable, or correctable), but with an approach that is easily explainable, auditable and correctable (if necessary). With supervised machine learning and deep learning, you "trust" how the system integrates what it has been taught. You do not know if it has been taught enough to appropriately handle situations it has never encountered before.

Question: How does KEEL compare to curve fitting approaches?

Answer: A common practice is to measure the real world and attempt to create a "formula" from the data accumulated.

This approach is used when there is little (or no) "understanding" of the problem. By "understanding" we mean that an expert doesn't know enough about the problem to describe it in a formula. Data is used to derive a synthetic understanding. This approach may use some kind of curve fitting (pattern matching) approach. One risk to this type of approach is that the measured data may be subject to external influences that may not have been measured or accumulated in the dataset. This results in "garbage in - garbage out" types of problems. If this happens, one may not even recognize that there is a problem. It is often difficult to trace back to how the data was gathered and accumulated.

With KEEL, one uses a human's understanding (even if it is limited), to model the system. The dynamic nature of the KEEL language helps the human test the model under various scenarios by stimulating inputs and observing the response. This is an iterative process as the human fine-tunes his/her understanding of how the information is to be interpreted under varying situations. One could say that it is even an evolutionary process, since the models are likely to be refined over time to take advantage of new information items and new control variables. KEEL focuses on creating models that will get better and better over time. The human "learns" how to describe his/her understanding as the models are being created and refined. The result is a visually explicit model that allows one to "see" how data items are interpreted at any instant in time. This visually explicit approach allows the models to be challenged. This is all part of the evolutionary process. In some cases, KEEL-based systems can be designed to evolve on their own. These systems can use a variety of techniques to incorporate adaptive behavior.

Policies (for humans or for machines) described in KEEL are explicit, compared to those described with a human (verbal or written) language, where most terms are subject to individual interpretation.

Question: How does KEEL support the definition of a "functional compiler"?

Answer: A compiler absorbs "source code" and translates and optimizes that code into another form. The source code is the KEEL Dynamic Graphical Language that makes it easy for a human to define and test complex (dynamic, non-linear, inter-related, multi-dimensional) problem sets without the need to understand higher-level mathematics or be skilled in programming. The KEEL Toolkit translates this graphical language into conventional programming languages. So the KEEL Toolkit is doing the heavy-lifting. The KEEL Toolkit also provides a decompiling feature with a process called "language animation". It can do this in real-time, so a user can "watch a system think".

Question: Does KEEL learn?

Answer: The term "learning" is subject to many interpretations in the cognitive domain. We would say that KEEL technology adapts, rather than learns. We would suggest that a system that learns will have to be able to automatically accept new information (inputs) that it has never seen before and automatically determine how that new information relates to and impacts all of its existing information. KEEL technology is an expert system technology that requires a human expert to define the reasoning model. The human expert must define how each input relates to all the other pieces of information and what outputs are to be created. In this way, the human expert defines how the system will adapt to changing inputs. The human expert is in control.

We would suggest that a true learning system will decide, on its own, how to integrate new information sources. A human might be termed a true learning system. At birth, the human knows nothing (from a judgmental reasoning standpoint). The baby is exposed to new information and evolves to adulthood. Some adults turn out good, and do good things; and some turn out bad, and do bad things. We would suggest that this evolutionary process is not what we want in machines that are mass produced. There would be the potential of creating devices that evolve differently and perform differently. This could make them uncontrollable.

Auditable Teachability: We would suggest that KEEL Technology provides services to create "teachable" systems. A number of services and techniques are built into the KEEL Toolkit that provide system engineering tools to support systems that need to be periodically updated and extended.

There may be applications, however, that can benefit from the incorporation of true learning abilities. It may be possible to use KEEL engines as policemen to oversee this type of system. This is an area of potential research.

So our answer to the question as to whether KEEL learns, is NO. KEEL can adapt, if that is what the expert wants it to do.

Question: How do you differentiate "adapting" from "learning" (a machine that adapts, versus a machine that learns how to perform), and why should you care?

Answer: We call KEEL a platform for building adaptable solutions, because the policy maker defining KEEL behavior is defining (limiting) how the machine will adapt to changing influencing factors. The human is in control. Machine learning systems simply interpolate between taught patterns. One can state that humans "learn" throughout their life. And they "learn" how to adapt. But humans all learn differently, and they are likely to all adapt differently. This may be okay for evolution, where some humans learn to do some really ineffective / unacceptable things that can change the course of history. This may not be okay for mass produced machines that could learn (on their own) what is right and what is wrong.

Question: Is KEEL scalable?

Answer: KEEL is scalable in multiple ways. First, a single KEEL "engine" (the encapsulation of a KEEL design), uses long integers to provide identifiers for inputs and outputs, so the number of inputs and number of outputs is limited to the size of a long integer on the target platform that is chosen. This would be a physical limit to the largest size of a single KEEL "engine". Realistically, however, we just say that the size of a KEEL "engine" is unlimited, because it would probably not be "practical" to create such an engine. It is more appropriate to break "large" problems into manageable partitions. If these "chunks" are to be processed on a single microprocessor (computer), then we have system integration tools that will automatically wire multiple engines together (so they can be compiled together).

Sometimes the discussion of scalability is related to "performance". Performance is related to complexity, both in 1) the number of inputs and outputs (Positions and Arguments in KEEL Terminology) in the KEEL design, and 2) the number of functional relationships between data items (wires in KEEL Terminology). The more complex the system design, the longer it takes to process. KEEL Technology supports 2 processing models: Normal Model: slightly larger, because it contains 2 additional tables. It operates slightly faster; and Accelerated Model: slightly smaller, because it doesn't contain 2 additional tables. It operates slightly slower. The speed difference is dependent on the type of problem being solved. For example, a problem with lots of inputs that "all, or mostly all" change constantly, would benefit from the Accelerated model, while if the same problem had only a few inputs that changed the Normal model may operate faster. The Accelerated model will operate with less jitter.

On the very small scale, KEEL "engines" are optimized to the extent that services that are not required by the cognitive design are left out of the conventional source code. This addresses the need for embedding cognitive solutions in very small devices (e.g. sensor fusion). This may reduce the approximately 3K even further.

We also say that KEEL is architecture independent. Here we are talking about how KEEL "engines" are distributed. Because KEEL engines operate independently (at any instant), they operate just like a collection of humans, each with their own responsibilities. Some kind of network infrastructure can tie them together to allow them to share / exchange information. Each entity (human or KEEL engine) uses the information available at the time the information is processed. While we do have tools to integrate multiple KEEL engines together on a single microprocessor, we do not supply tools to integrate information across a network. There are various commercial and proprietary solutions to handle this infrastructure and KEEL should be able to be integrated into any of them.

Question: Is KEEL suitable for upgrading existing systems, or only for integration into new systems?

Answer: Most certainly KEEL is suitable for integration into existing systems. It has a very simple interface (API) that allows KEEL cognitive engines to be integrated into any system. All that is required is to load normalized inputs into an input table and make a call to the "dodecisions" function (the KEEL Engine). Results are then pulled from an output table. Sample code to package this entire process into a function is autogenerated with the code itself to accelerate even this simple process. So assuming that one is capable of adding a simple function to an existing design, it should be possible to integrate KEEL "Engines" into existing designs.
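The integration pattern described above (load normalized inputs, call the engine, read the outputs) might look like the following sketch. Only the function name `dodecisions` comes from the text; the table names, the `normalize` helper, the sensor ranges, and the averaging body of the stand-in engine are all hypothetical, and the autogenerated sample code will differ.

```python
from typing import List

# Hypothetical stand-ins for the engine's tables; real generated code will differ.
input_table: List[float] = [0.0, 0.0]
output_table: List[float] = [0.0]

def dodecisions() -> None:
    """Placeholder for the generated engine call; here it simply averages the inputs."""
    output_table[0] = sum(input_table) / len(input_table)

def normalize(value: float, low: float, high: float) -> float:
    """Scale a raw reading into 0..1, clamping out-of-range values."""
    if high <= low:
        raise ValueError("high must be greater than low")
    return max(0.0, min(1.0, (value - low) / (high - low)))

def run_engine(temperature_c: float, pressure_kpa: float) -> float:
    """Wrapper: load normalized inputs, call the engine, read the result."""
    input_table[0] = normalize(temperature_c, 0.0, 100.0)
    input_table[1] = normalize(pressure_kpa, 0.0, 200.0)
    dodecisions()
    return output_table[0]

result = run_engine(50.0, 100.0)
```

An existing application would then only need to call the wrapper (here `run_engine`) wherever it needs a decision.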

For totally new systems one can accelerate the overall development process by allowing domain experts to develop operational code describing "behavioral functionality" and allowing the system engineers to focus on system architecture and infrastructure issues.

Question: How can you model physical systems with KEEL?

Answer: While we know that machines and physical objects don't have a mind in the sense of a human mind, they sometimes exhibit human-like behavior. For example, your car breaks down at the most inopportune time. It is as if the car knew when and how to cause aggravation. A rain storm causes a leak in the roof, but the damp ceiling is visible in a completely different location. It is as if the water had a mind of its own to search for a path to the ground.

In these and other similar cases, elements of the system are changing. There is a balance at any instant in time between all elements. There is a certain wear of mechanical components. There is a certain set of pressures that are exerted at any instant in time. The pressures cause change to the physical characteristics over time. With KEEL, it is easy to model the behavior of these pressures. One can visualize impacts to physical structures. If you have good information on the driving and blocking factors you can model the interactions. This approach can be used for diagnostics and prognostics modeling and adaptive control. Devices can then react to degraded components and they can react to external influences, all on their own.

Question: Why do you call KEEL a "technology" rather than "tool"?

Answer: We define technology as a "way to do things" (as in a technical approach) and a tool as something that "facilitates the implementation of the technology".

We call KEEL a 'technology' because it is more than just a tool. It is a new way to process information. A MIG welding torch is a tool. With it, you can create a wide variety of mechanical structures. The KEEL Toolkit (with the dynamic graphical language) includes a set of tools used to create KEEL 'Engines' which encapsulate the new way to process information. The KEEL technology 'patent portfolio' covers the basic algorithms, the architecture for the KEEL Engine, and the KEEL dynamic graphical language. Licensees to KEEL Technology get access to the toolset and the right to embed the KEEL Engines in their devices and applications. Licensing KEEL Technology would be like licensing the right to use a MIG welding torch to build mechanical structures composed of MIG welded components. NOTE: A MIG (metal inert gas) weld is a specific type of weld. A KEEL Engine processes information in a specific way.

Question: What do you do if you don't think your domain experts "think in curves"?

Answer: While we suggest that when one designs a KEEL system, one "thinks in curves" (because we are commonly focusing on non-linear systems); one almost never starts with this level of complexity. Non-linear relationships between data items are usually an evolutionary enhancement to a design. The process of developing KEEL solutions is to first identify the outputs (or the items that can be controlled). Then the inputs to the system are added (the items that contribute to controlling the outputs). Then the linkages between items are added with wires. These relationships are almost always linear at the beginning. Remember this is done in seconds as "positions and arguments and wires are just dropped on the screen". Then (maybe) the importance of different inputs is adjusted. Maybe several inputs need to be combined before controlling another output. KEEL designs are extended. The designer drops functionality into the design and tests to see that it performs.

This is an iterative (design, test, modify) development process. Non-linear relationships are gradually incorporated into the design. So, while we suggest that domain experts "think in curves", this is just a natural progression of the design refinement process. It is also easy to integrate and test these types of relationships. When one goes through KEEL training, the concept of "thinking in curves" is taught so the user gets experience creating these types of systems.

In some cases, linear or state change control is satisfactory for production systems. But in other cases, as the design is refined, it is obvious that some kind of non-linear relationship (curve) might be appropriate. These can be merged into the design from the library of curves supplied with the toolkit, or they can be created by the domain expert and integrated into the design.
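The progression from a linear relationship to a curve can be sketched as below. The `slow_start` curve, the `contribution` function, and the 0..1 signal range are assumptions made for illustration; they are not taken from the library of curves supplied with the toolkit.

```python
from typing import Callable

Relationship = Callable[[float], float]

def linear(x: float) -> float:
    """First-pass relationship: output tracks input directly."""
    return x

def slow_start(x: float) -> float:
    """Hypothetical curve: little effect at low input, strong effect near full scale."""
    return x * x

def contribution(signal: float, relationship: Relationship, importance: float) -> float:
    """Apply a relationship to a signal clamped to 0..1, scaled by its importance."""
    clamped = max(0.0, min(1.0, signal))
    return importance * relationship(clamped)

first_pass = contribution(0.5, linear, importance=1.0)
refined = contribution(0.5, slow_start, importance=1.0)
```

Swapping `linear` for `slow_start` changes how strongly a mid-range signal influences the result without changing anything else in the design.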

Question: If a KEEL-based system is based on a human expert's opinion, what do you do if there is more than one expert and they disagree?

Answer: It is true that the rules developed with the KEEL Toolkit mimic how an expert models the reasoning process. When there is more than one expert, there is the possibility (likelihood) that there will be some level of disagreement. However, a company or organization or individual still has to take on the responsibility of deciding on a course of action (which expert to trust). The same is true with a KEEL design. Someone still has to take responsibility of choosing which expert is correct, or at least deciding on how to integrate the opinions of both into a final decision or action.

While KEEL Technology will not make that decision for the system designer, the graphical language exposes all of the reasoning. When experts describe their decision-making reasoning using the English language (or another verbal or textual language), one is usually left with only a loose definition of the reasoning that was used. In other words, the English language is not very good at describing dynamic, multi-variable, non-linear, inter-related problem sets. This is especially true of safety critical systems or manufacturing systems. This is why these types of systems are commonly described with conventional computer languages.

So our response to the question focuses on why it is necessary to describe a human expert's judgmental reasoning with a graphical language. This graphical language is explicit. It can be reviewed, tested, and audited. In this manner, when there are disagreements between experts, the model can be investigated and refined over time.

Question: How can you use KEEL if you don't know what the outputs and inputs are?

Answer: In complex problem domains it is common that you don't know all of the inputs and outputs up front. It is likely, however, that you can identify at least some of the outputs. These will be the control variables that you will use to respond to your problem domain. Given the output variables you know, you should be able to identify some potential inputs. A key attribute of KEEL Technology is that it is so easy to add inputs and outputs to the model using the KEEL "dynamic graphical language". This "ease of use" characteristic should help you "think" about how the inputs and outputs collectively inter-operate in your problem domain.

Another characteristic of "solving" complex problems is that the solution may evolve. You may add new sensors, whose data can participate in the solution. You may identify a new "symptom" that impacts the overall goals of the system and needs, either to be controlled or taken into account.

The KEEL dynamic graphical language helps you think about solving the problem and gives you the opportunity to stimulate inputs and visualize the results. Addressing these kinds of problems with KEEL Technology (language and execution environment) is much simpler than attempting to describe hypothetical problems to a mathematician and getting his/her solution implemented using conventional software techniques, where it can only then be tested.

In summary: When you are not sure of the inputs and outputs, it is easy to hypothesize on what they might be and how they might inter-operate with KEEL Technology. When you are comfortable with the results the model can be easily deployed in more advanced simulations and emulations and finally delivered in a product or service. When you have defined and documented your model in KEEL it provides an explicit explanation that can always be reviewed and extended with ease.

Question: How are the concepts of "surprise" and "missing information" handled by KEEL Technology?

Answer: One of the problems with ANN-based (Artificial Neural Net) solutions is that they do not react well to surprise. ANN-based systems are pattern matching systems that are taught. If they have not been taught a particular pattern of inputs, they will just interpolate between what they have been taught and create an answer. With KEEL, one describes how to interpret information, not patterns. There is no interpolation between taught points. With KEEL, one can create systems that decide how to respond to collections of abstract information points. There is no interpolation or undetermined action.

The concept of "surprise" can be decomposed at multiple levels. First, one can encounter scenarios with a known set of inputs combined in unexpected ways. With KEEL, one can examine the system and observe how it was interpreted. If changes are needed they can easily be made. With ANN there is no way to know "how" an answer was derived, so it can only be addressed with increased training.

Another way of decomposing "surprise" is for a system to encounter new variables that impact the problem domain that were never before considered. This would be like a "person" walking down the street when a Martian appears from behind a bush. An ANN-based system will respond, although it is not clear how. We have some ideas of how one might build a KEEL-based system that would know how to handle this kind of surprise and would like to discuss this with selected organizations. The abstract "Considerations for Autonomous Goal Seeking" identifies the project.

"Missing information" can be handled in several ways. A piece of information may be necessary to make a certain decision (information that supports a decision or action). If it is missing the decision could be blocked. This would be a forward chaining approach. For example: A decision must be supported by A, B, and C. Alternatively, a piece of information could be used to reject a decision or action. In this case, the designer could create a model where missing blocking information would not block the decision or action. This second option would provide a reverse chaining approach. Complex subjective decisions are commonly a fusion of supporting information and potential blocking information. For example: an analysis of an image could identify an object with numerous features; among them aircraft wings and an aircraft tail. The interpretation "could" demand that both wings and tail are required to know it is an airplane, or it could justify the decision if either aircraft wings or aircraft tail are observed. The interpretation "could" exclude a decision that it was a car if the object had either wings or a tail or other features not belonging to a car.

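The treatment of missing supporting and blocking information in the airplane example above can be sketched as follows. This is a simplified boolean illustration under stated assumptions: the `decide` function, the use of `None` for missing information, and the feature names are hypothetical, and a real design would work with graded values rather than true/false.

```python
from typing import Dict, Iterable, Optional

Observations = Dict[str, Optional[bool]]  # None means the information is missing

def decide(obs: Observations, supporting: Iterable[str], blocking: Iterable[str],
           require_all: bool = True) -> bool:
    """Return True when the decision is supported and not blocked."""
    # Missing supporting information counts as "not supported".
    support = [obs.get(name) is True for name in supporting]
    supported = all(support) if require_all else any(support)
    # Missing blocking information does not block the decision.
    blocked = any(obs.get(name) is True for name in blocking)
    return supported and not blocked

image: Observations = {"wings": True, "tail": None}  # tail was not observed

is_airplane_strict = decide(image, ["wings", "tail"], [], require_all=True)
is_airplane_loose = decide(image, ["wings", "tail"], [], require_all=False)
is_car = decide({"wheels": True, **image}, ["wheels"], ["wings", "tail"])
```

With the tail missing, the strict interpretation withholds the airplane decision, the loose interpretation accepts it, and the observed wings block the car decision.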
Question: Isn't the KEEL graphical language just another way to create a formula?

Answer: It is true that the KEEL graphical language is translated into conventional computer languages for processing, which could possibly be expressed as a textual/numeric formula. However, when the problem is a multi-variable, multi-output, inter-related, non-linear, dynamic system, the development and testing of such a numeric formula (using the common definition of a mathematical formula) would be difficult (very difficult)! Using the KEEL graphical language and the dynamic support built into the KEEL Toolkit, these systems are relatively easy to construct and test. Also, because the KEEL language can be deployed into several different conventional languages, it is easy to build test systems (simulators or emulators) to perform extensive system tests before deploying them in production environments. So the answer is "yes" (formulas are created), but because of the complexity of the formulas, they can best be visualized in the KEEL graphical language.

Because another benefit of using KEEL Technology is the ability to audit complex systems, the technology supports reverse engineering of actual actions. By importing snapshots of real-time input data into the design environment, one can "see" the reasoning performed by production applications. This is accomplished by viewing the importance of individual data items and tracing the wires to view inter-relationships.

One additional advantage of KEEL Technology and the KEEL graphical language is built into the KEEL Toolkit: it constantly monitors for unstable situations and warns the designer if a design would create one. Because KEEL Technology balances information, it would be possible to create a system that would never stabilize (go left - go right - go left - go right....). The design environment watches to ensure the designer does not create this kind of system. Should one attempt to develop this type of system manually (hard coded formulas), additional tools may be necessary to ensure that an unstable design is not created.
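To make the "go left - go right" problem concrete, here is a minimal Python sketch of the kind of check one might need when hand coding such a system. It is not how the KEEL Toolkit performs its monitoring (that is not described here); the function `classify`, its parameters, and the state representation are assumptions for illustration.

```python
def classify(update, state, max_cycles=100):
    """Classify the behavior of repeatedly applying `update` to `state`.

    Returns "stable" if a fixed point is reached, "oscillating" if an
    earlier state repeats without settling, and "undecided" otherwise.
    States must be hashable. Hypothetical helper for illustration only.
    """
    seen = {state}
    for _ in range(max_cycles):
        next_state = update(state)
        if next_state == state:
            return "stable"        # nothing changes any more
        if next_state in seen:
            return "oscillating"   # the system is going around in a loop
        seen.add(next_state)
        state = next_state
    return "undecided"


# A design that flips direction on every cycle never stabilizes.
flip = classify(lambda s: "right" if s == "left" else "left", "left")
# A design that climbs to a limit and stays there does stabilize.
settle = classify(lambda s: min(s + 1, 3), 0)
```

Here `flip` evaluates to "oscillating" and `settle` to "stable".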

Question: How does the KEEL graphical language differ from other "graphical languages"?

Answer: Most other graphical languages use wires or arrows to show data flow or logical processing flow between graphical components. These are sometimes categorized as "directed graphs". The graphical components themselves commonly encapsulate functionality. This is completely different from the KEEL graphical language. With KEEL Technology, the functionality is defined by the wires between connection points on the graphical icons. The functionality defined in this way is explicit. Within KEEL designs, there is no "hidden" processing within boxes. While there is a functional ordering from source connection points to sink connection points (information provider to information user), this is a functional definition rather than a data flow process. The wires define how one data item impacts others. During the "cognitive cycle" all relationships are evaluated. This is similar to processing a formula, where one is not interested in what happens part way through processing the formula. One is only interested in the results of processing the formula. Within the KEEL graphical language the bars represent values, not functions. This is somewhat similar to "microcode" that would be processed behind the scenes in a microprocessor during an instruction cycle.

One can also look at most other graphical languages as a decomposition of a design into pieces that can be viewed as separate functions that are processed sequentially. Information "flow" is critical to their understanding. With KEEL the concept is that all items are processed during the same time frame (the cognitive cycle). Order is not important to the outcome and intermediate states are not important as they are not visible to the outside world.

Another view of most other graphical languages is that they can perform (or encapsulate) conventional computer code that has the capability to perform mathematical functions, like 1 + 1 = 2. The KEEL language has no mathematical functions. It has no conditional branch instructions. It can, however, identify the most important value. It does provide ways for one item to influence other items in non-linear ways. Conventional logic (external to the KEEL Engines) is still used to perform mathematical functions. This is similar to left and right brain functionality. Conventional logic is used for left brain functions and KEEL is used to perform (subjective, image processing) right brain functions.

Simulink with MATLAB (from MathWorks) is an example of a graphical language, with MATLAB-encoded functionality inside Simulink graphical objects. IEC 1131 (IEC 61131-3) graphset is another similar system. The KEEL Toolkit can be used to create designs that can be translated to Octave (an open source language largely compatible with MATLAB source code). The KEEL Toolkit can also be used to create "PLC Structured Text" that can be integrated into IEC 1131 function blocks. KEEL focuses on creating models that would be deployed inside the Simulink graphical objects or IEC 1131 function blocks.

We have been asked whether one could create the KEEL "dynamic graphical language" with MATLAB / Simulink. The answer is potentially yes: the KEEL Toolkit, with its dynamic graphical language objects, could be created with any software tools capable of creating graphical objects, drawing wires that tie the objects together, and constructing the KEEL functionality behind the graphics. However, the KEEL "dynamic graphical language", the way that KEEL Engines are structured, and how they process information are all covered by granted US patents. So creating the KEEL Toolkit (KEEL dynamic graphical language) would require a license from Compsim.

Question: How does your "tool" compare to "LabVIEW" (National Instruments) or other similar HMI (Human Machine Interface) tools?

Answer: The person asking this question might be focusing on the look and feel of a graphical tool rather than the target implementation.

For example: The purpose of LabVIEW is to create an interactive view of a process. The primary purpose of the KEEL Toolkit is to create an executable cognitive function that can be embedded in an application, with the added benefit of allowing the application to show how all of the information in the application is actually being processed. LabVIEW is intended to expose only certain values from a process that some engineer decided were of interest. It would be hypothetically possible to duplicate the rendering of the KEEL language in LabVIEW, but this would substantially increase the cost of managing the entire project, since no HMI development would be necessary with KEEL. On the other hand, LabVIEW (or any other HMI display) could be driven by an embedded KEEL Engine, if that was desired.

One response is to ask if the solution they need targets an embedded platform or architecture. Example: a KEEL Engine (created with the KEEL Toolkit) could be targeted for deployment in an Arduino microcontroller for a micro-UAV. A micro-UAV may have multiple KEEL Engines (or small functions for adaptive command and control). Would you consider using LabVIEW (or any of the other HMI tools) to create embedded, adaptive command and control functionality? We suggest the answer is "no". On the other hand, there may be some value in embedding KEEL functionality inside of a LabVIEW application, because KEEL's non-linear, adaptive functionality is more cost effective to develop. Using the KEEL Toolkit, a KEEL Engine design could be saved as a C function and integrated per the National Instruments sample: https://decibel.ni.com/content/docs/DOC-1690 Similarly, we have an Application Note that describes the process of inserting KEEL Engines into GE's GE-PACSystems™ using their RLL programming environment: https://www.compsim.com/keel_app_notes_available.html

Another response is to suggest that LabVIEW provides a "solution", or a complete deliverable package. The KEEL Toolkit is used to create a function that provides only a component of a solution (a cognitive function to think about a set of inputs and determine what should be done about them). This equates to a formula (just one formula that would be very difficult to develop without the KEEL Toolkit). Applications could contain one or many KEEL Engine "components". KEEL functions (KEEL Engines) would be "called" by the broader application software when the KEEL information fusion functionality was required.

It is important to remember that with the KEEL Toolkit we are attempting to provide a way for a domain expert to explore and test a desired behavior before committing to a delivered solution. Much of the focus will be on establishing the "value" of pieces of information in a dynamic adaptive setting. This is accomplished with the KEEL "dynamic graphical language". It is common for this work to evolve as the model is developed and the interactive "visual value system" is refined. The domain expert will likely not know the values in advance.

It may be more appropriate to compare the KEEL Toolkit (tool) to MATLAB / Simulink, where the target is a "function" that could be integrated in a target application. See the question "How does the KEEL graphical language differ from other 'graphical languages'?" above.

Question: What types of problems are best suited for a KEEL solution?

Answer: KEEL can be used to solve problems:

- Where human experts are required to interpret information to make the best decisions or take the most appropriate actions

- Where devices must operate autonomously and make judgmental decisions on their own

- Where devices are required to make control adjustments / decisions when human operators are not present

- When repetitive human judgmental decisions are prone to error

- Where trained operators are potentially tricked into overlooking critical attributes

- Where human experts take too long to make judgmental decisions

- When the judgmental decisions of the expert system must be explained (when it is important to know why actions were performed)

- When it is not economical to develop and maintain straight line code (IF, THEN, ELSE) because the problems are complex (non-linear systems)

- Where the environment is dynamic, the importance of information changes, and the system must react to change

- Where there is an advantage to be able to create one design and execute it on multiple platforms: device, software, web

- Where the small memory footprint of a KEEL solution is an advantage

- Where architectural issues may prohibit other solutions (KEEL technology is architecture independent)

- Where there are many complex models to be created and "ease of use" / "rapid development cycles" are required.

Types of management decisions:

Some example decisions and actions commonly allocated to management include:

- Prioritize / Re-allocate / Re-direct

- Do / Don't Do (choose between separate options)

- Expand / Downsize (Add / Remove)

- Reward / Punish

These types of decisions and actions require that management has the capability to understand and measure pieces of information in order to respond.

Question: How does it (KEEL) work (in systems)?

Answer: Just to review: KEEL is a “technology”, or a new way to put explainable and auditable judgment and reasoning into devices or software applications. The “how it works” is encapsulated in KEEL Cognitive Engines. These are software functions or class methods, depending on the programming language used. The KEEL “engines” are automatically “coded” in the language of choice and given to the user in the form of a text file. For object-oriented languages, a class with a few methods is created. For non-object-oriented languages, a few functions are created. There are two versions of these classes or functions that work slightly differently, but yield the same results.

Before discussing the functionality, it is important to review how judgment and reasoning are different from simply following rules. Humans dispense their expertise by being able to evaluate alternatives in order to control decisions and actions. This is a mental balancing act. The human brain is an analog machine that is integrating and balancing driving and blocking factors in order to make decisions. In complex decisions the human brain is not only interpreting the value of information for its impact on different alternatives, but it is evaluating the information for its age, for trust, for its impact on linked problems, and for its impact on tactics and strategies. It is not simply determining what to do, but also how, how much, when, and where. Judgment and reasoning are often applied when multiple problems, some with conflicting characteristics, need to be addressed simultaneously. It may not be acceptable to address one problem now, without considering its impact on the future. Compare this to rules, where rules are explicit: If this then do that.

Back to “how does it work?”: The KEEL Engine has one primary function: to go think. In one processing model available to the user, a snapshot of the inputs (influencing factors) is given to this function to go think. Within the KEEL Engine, the information is processed until a stable system is achieved. This is where the analog-like balancing act is performed. When all inputs and outputs are stable (there is an internal feedback loop in process), the function exits and the outputs (decisions and outputs controlling actions) can be executed. KEEL “Tools” make sure that the problems are solvable, before creating the KEEL Engines.
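The "process until stable" idea can be illustrated with a small Python sketch. This is not the code the KEEL Toolkit generates: the function `think`, the two relationships, the tolerance, and the input names are all hypothetical, chosen only to show a snapshot of inputs being balanced through a feedback loop until nothing changes.

```python
def think(inputs, relationships, tolerance=1e-6, max_iterations=1000):
    """Re-evaluate every relationship until the outputs stop changing.

    `relationships` maps an output name to a function of (inputs, outputs).
    Hypothetical sketch; real KEEL Engines are produced by the KEEL Toolkit.
    """
    outputs = {name: 0.0 for name in relationships}
    for _ in range(max_iterations):
        # Every output is recomputed from the previous cycle's values,
        # so the order of evaluation does not affect the result.
        updated = {name: fn(inputs, outputs) for name, fn in relationships.items()}
        if all(abs(updated[n] - outputs[n]) <= tolerance for n in outputs):
            return updated  # stable: outputs can now be acted upon
        outputs = updated
    raise RuntimeError("the design did not stabilize")


# Two mutually dependent values: the urge to act is held back by caution,
# and caution is relaxed somewhat once action is under way.
relationships = {
    "act": lambda i, o: i["drive"] * (1.0 - o["caution"]),
    "caution": lambda i, o: i["risk"] * (1.0 - 0.5 * o["act"]),
}
result = think({"drive": 0.8, "risk": 0.5}, relationships)
```

For this snapshot the loop settles at roughly act = 0.5 and caution = 0.375. The `RuntimeError` branch stands in for the case that, according to the text above, the KEEL Tools rule out before an engine is created.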

The other processing model has the KEEL design tools doing a little more work. In this case the KEEL Tools determine an optimal processing model. So rather than iteratively processing information until stable, one additional table is created that defines the optimal processing path. The system cost is one additional table, and the potential benefit is a somewhat faster solution.
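One way to picture a table that fixes the processing path is a precomputed evaluation order. The Python sketch below is an assumption about the general idea, not a description of how the KEEL Tools build their table: it assumes a design with no circular dependencies, uses the standard library's `graphlib.TopologicalSorter` (Python 3.9+) to compute the order once at design time, and then evaluates each item exactly once at run time. All names are hypothetical.

```python
from graphlib import TopologicalSorter


def build_order(depends_on):
    """Design time: compute a fixed evaluation order (the extra table)."""
    return tuple(TopologicalSorter(depends_on).static_order())


def think_once(inputs, rules, order):
    """Run time: a single pass over the precomputed order, no iteration."""
    values = dict(inputs)
    for name in order:
        if name in rules:  # plain inputs have no rule and keep their value
            values[name] = rules[name](values)
    return values


# Each item lists the items it depends on.
depends_on = {"threat": {"distance", "speed"}, "brake": {"threat"}}
rules = {
    "threat": lambda v: v["speed"] * (1.0 - v["distance"]),
    "brake": lambda v: min(1.0, 2.0 * v["threat"]),
}
order = build_order(depends_on)
result = think_once({"distance": 0.25, "speed": 0.8}, rules, order)
```

The trade described above shows up directly: `order` is one more table to store, and in exchange each value is computed once per cycle instead of repeatedly until stable.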