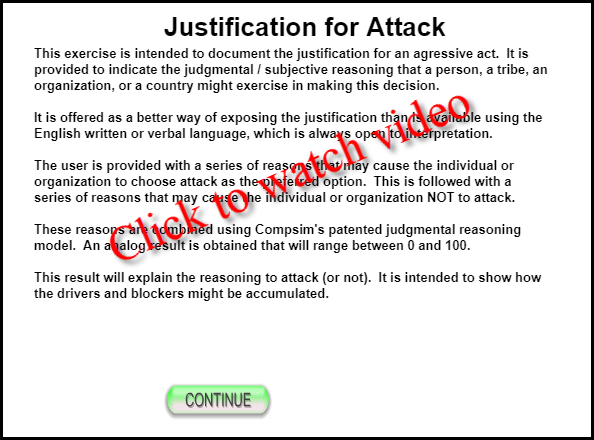

This demonstration lets the user assign a significance to each of a number of reasons for making a pre-emptive military attack. Although it focuses on a military attack, the reasoning model might be applied to other types of aggressive behavior, and it can be used to address decisions made by an individual, an organization, a tribe, or a country. The intent is to document the justification less ambiguously than written and spoken human language usually does.

A second concept demonstrated here is the judgmental reasoning that might ultimately be deployed in a robotic warrior, in line with concepts developed for Future Combat Systems. In this light, robotic weapons will be required to make judgmental decisions similar to those made by human warriors today. Because these robotic weapons will be mass produced, it will be mandatory that their decisions be explainable and auditable. One part of the auditing process will be an understanding of the policies that define how individual information items are interpreted. A second part will cover the data items (sensor data) themselves. This demonstration focuses on the first part of this analysis.

The final "relative judgmental value" is only that: a relative value. If it is above the threshold, the decision would be to move forward.
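As a rough illustration of the comparison described above, the following sketch combines weighted reasons into a single relative value and checks it against a threshold. It is not KEEL's actual model: the weighted average, the 0.0 to 1.0 scales, and the names `Reason`, `relative_judgmental_value`, and `decide` are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class Reason:
    name: str
    weight: float    # significance assigned by the user (assumed scale 0.0 to 1.0)
    strength: float  # how strongly the reason currently applies (assumed scale 0.0 to 1.0)


def relative_judgmental_value(reasons):
    """Weighted average of reason strengths; 0.0 when all weights are zero."""
    total_weight = sum(r.weight for r in reasons)
    if total_weight == 0:
        return 0.0
    return sum(r.weight * r.strength for r in reasons) / total_weight


def decide(reasons, threshold):
    """Return (value, move_forward); move_forward is True only if value is above threshold."""
    value = relative_judgmental_value(reasons)
    return value, value > threshold
```

Because each reason keeps its own weight and strength, the inputs behind any decision can be recorded alongside the result, which is the kind of audit trail the demonstration argues for.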

An alternative use of this kind of technique may be to compare a series of actions by a particular person, tribe, or party and use this information to gauge their future actions. In other words, it can be used to estimate where their threshold might be.
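One hedged way to picture this estimate: given past situations that have each been scored with a relative value and labeled with whether the actor moved forward, the threshold should lie between the highest value that did not lead to action and the lowest value that did. The function name `estimate_threshold_bounds` and the assumption that the actor applies a single fixed threshold are hypothetical simplifications, not something the demonstration itself specifies.

```python
def estimate_threshold_bounds(history):
    """Bracket an actor's threshold from past behavior.

    history: list of (value, acted) pairs, where value is the relative
    judgmental value of a past situation and acted is True if the actor
    moved forward. Returns (lower, upper); either bound is None when the
    history contains no observation of that kind.
    """
    # Highest value at which the actor still held back.
    lower = max((v for v, acted in history if not acted), default=None)
    # Lowest value at which the actor moved forward.
    upper = min((v for v, acted in history if acted), default=None)
    return lower, upper
```

If the lower bound comes out above the upper bound, the observations are inconsistent with a single fixed threshold, which suggests the weights or the scoring need revisiting.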

As the market for autonomous and semi-autonomous devices expands, these devices will begin to take on more subjective responsibilities. These judgmental decisions have been the domain of humans in the past.

Humans perform their duties according to a set of policies, or "rules of engagement", that are intended to set guidelines for their actions.

This demonstration suggests that, when humans are asked to explain the reasons for their actions, written and spoken language fails to provide sufficient detail for an adequate audit. When these types of decisions are allocated to devices, we suggest that this loose coupling between actions and justification is not acceptable, especially when safety-critical systems are involved. We suggest that a graphical language, such as the KEEL graphical language, can allow the complex dynamic decisions of autonomous devices to be completely explained and audited.

While this demonstration does not show the complex policies that can be developed with KEEL Technology, it attempts to show that the graphical weights given to the various reasons provide significantly more insight into the justification for an action (a pre-emptive military attack in this case). We suggest that this is far superior to written and spoken human language alone.

See Compsim's paper titled "Auditable Policy Execution in Autonomous and Semi-Autonomous C2 Systems to Support the Next Generation Warfighter".

Copyright , Compsim LLC, All rights reserved